TRI Author: Simon Stent

All Authors: Xishuai Peng, Yi Lu Murphey, Simon Stent, Yuanxiang Li, Zihao Zhao

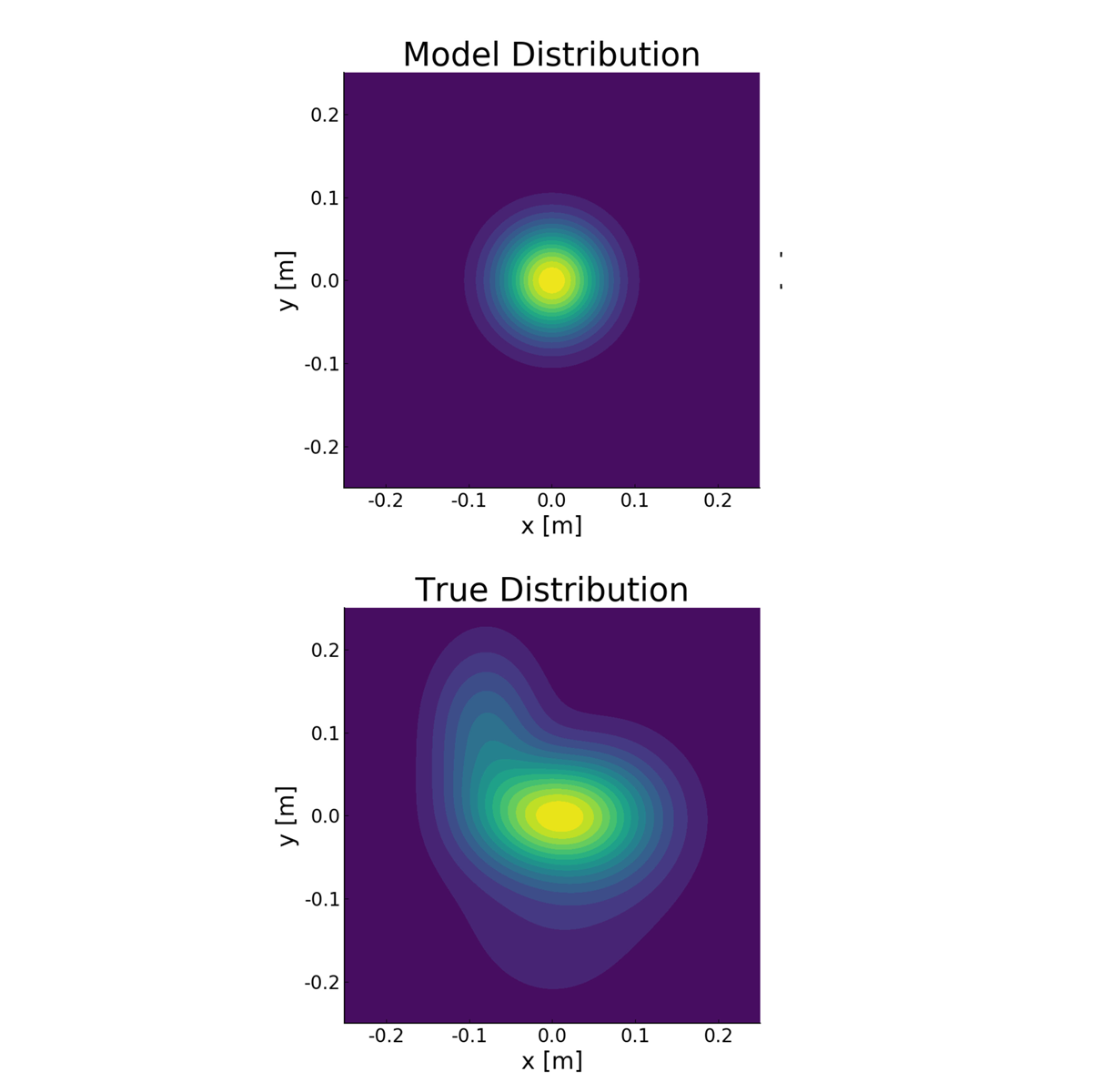

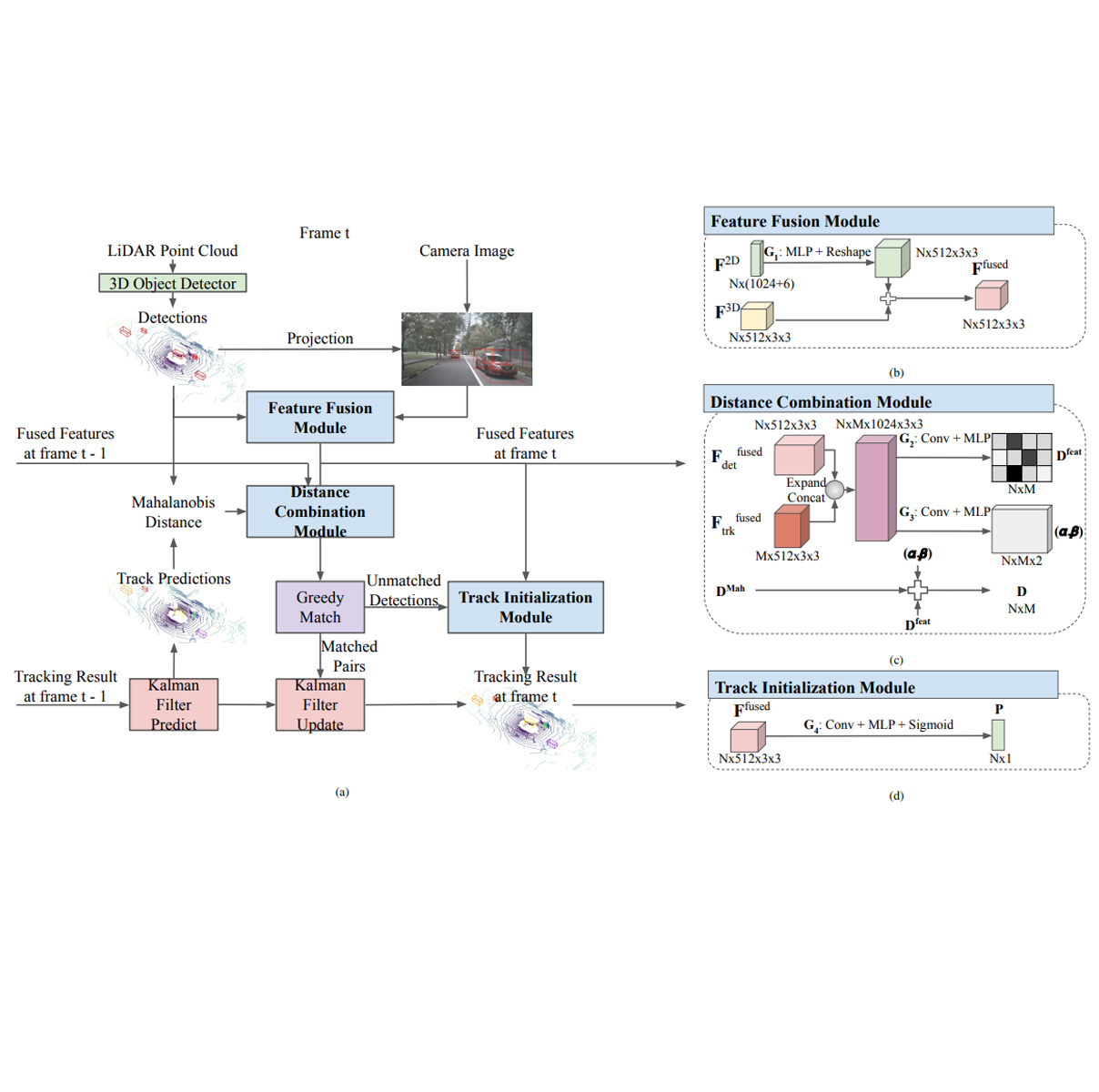

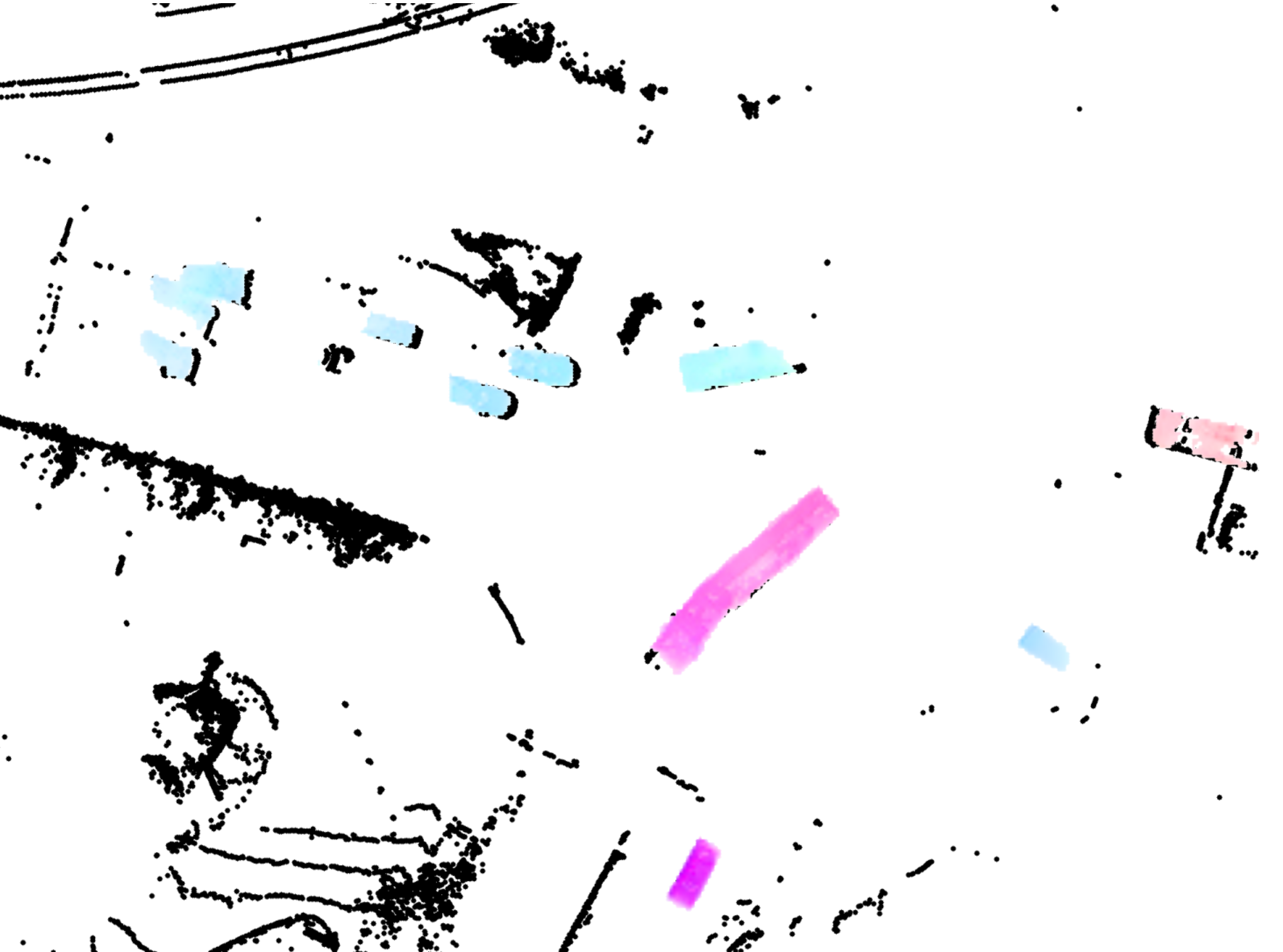

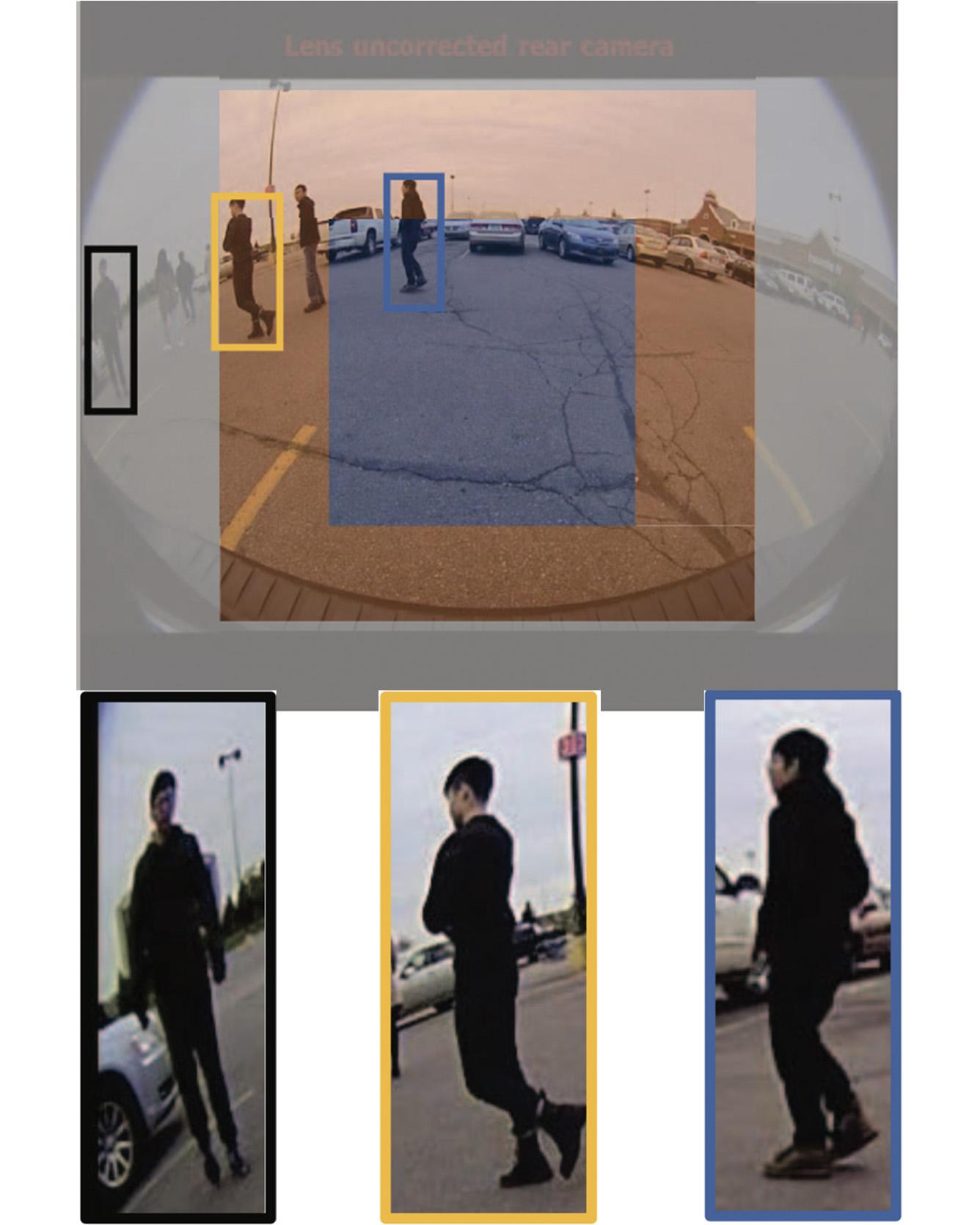

Objects in the periphery of fisheye images can become extremely distorted. This distortion can cause false positives and missed detections in automated object detection systems. It is a problem not only for systems trained on perspective images, but also for those explicitly trained on fisheye data. In this paper, we propose a new cost function for training object detectors on fisheye images. We model fisheye image distortion as an imbalanced domain problem and develop a domain association loss function to address it with deep learning. We define separate domains based on the level of distortion within the image plane and propose a new objective function, inspired by the recently introduced focal loss for object detection, which we call spatial focal loss. Our proposed loss incorporates a domain-modulating term that re-weights samples from different domains to encourage the learning of domain-invariant features. We implement spatial focal loss in the YOLOv2 architecture and evaluate it on the task of pedestrian detection in a fisheye dataset captured by a 360° camera system mounted on a moving vehicle and labeled with over 11,000 pedestrian instances. Our experiments demonstrate that, compared with existing loss functions including focal loss, spatial focal loss can improve performance in the highly distorted image periphery without sacrificing performance in the less distorted image center, and it requires no changes to the network architecture. By analyzing the locations of missed detections, we provide further evidence that our loss function can improve the learning of domain-invariant features.
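The abstract describes the loss only at a high level, so the following is a minimal sketch of the general idea under stated assumptions, not the authors' formulation. It starts from the standard binary focal loss, FL(p_t) = -α_t (1 - p_t)^γ log(p_t), and multiplies each sample's loss by a per-domain weight. Here the domains are assumed to be concentric rings around the image center (assuming distortion grows with distance from the center), and the weights are assumed to be inverse-frequency weights, so rarer, more distorted peripheral samples count more. The names `distortion_domain`, `domain_weights`, and `spatial_focal_loss_sketch`, the ring binning, and the weighting rule are all hypothetical illustrations of "re-weighting samples from different domains", not the paper's domain-modulating term.

```python
import math
from collections import Counter
from typing import List, Tuple


def focal_loss(p: float, y: int, alpha: float = 0.25, gamma: float = 2.0,
               eps: float = 1e-7) -> float:
    """Standard binary focal loss for one prediction p = P(y = 1)."""
    p = min(max(p, eps), 1.0 - eps)             # clamp to avoid log(0)
    p_t = p if y == 1 else 1.0 - p              # probability assigned to the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha  # class-balancing factor
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


def distortion_domain(x: float, y: float, cx: float, cy: float,
                      max_radius: float, n_domains: int = 3) -> int:
    """HYPOTHETICAL: assign a sample to a radial ring (0 = center, n_domains - 1 = periphery)."""
    r = math.hypot(x - cx, y - cy) / max_radius  # normalized distance from the center
    return min(int(r * n_domains), n_domains - 1)


def domain_weights(domains: List[int], n_domains: int) -> List[float]:
    """HYPOTHETICAL inverse-frequency weights; they average to 1 over the given samples."""
    counts = Counter(domains)
    k = len(counts)   # number of domains actually present
    n = len(domains)
    return [n / (k * counts[d]) if counts[d] else 0.0 for d in range(n_domains)]


def spatial_focal_loss_sketch(samples: List[Tuple[float, int, float, float]],
                              cx: float, cy: float, max_radius: float,
                              n_domains: int = 3, alpha: float = 0.25,
                              gamma: float = 2.0) -> float:
    """Mean domain-weighted focal loss; samples are (p, label, x, y) tuples."""
    if not samples:
        return 0.0
    domains = [distortion_domain(x, y, cx, cy, max_radius, n_domains)
               for _, _, x, y in samples]
    w = domain_weights(domains, n_domains)
    total = sum(w[d] * focal_loss(p, label, alpha, gamma)
                for (p, label, _, _), d in zip(samples, domains))
    return total / len(samples)


if __name__ == "__main__":
    # Toy 640x480 image: three samples near the center, one near a corner.
    cx, cy = 320.0, 240.0
    max_radius = math.hypot(cx, cy)
    batch = [(0.9, 1, 320, 240), (0.2, 0, 330, 250),
             (0.6, 1, 310, 235), (0.3, 1, 620, 40)]
    print(spatial_focal_loss_sketch(batch, cx, cy, max_radius))
```

Because the weights sum to the number of samples, the overall loss scale stays comparable to plain focal loss while the single peripheral sample in the toy batch is weighted three times as heavily as each central one. The paper applies its loss inside YOLOv2 training, which this per-sample toy does not attempt to reproduce.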

Citation: Peng, Xishuai, Yi Murphey, Simon Stent, Yuanxiang Li, and Zihao Zhao. "Spatial focal loss for pedestrian detection in fisheye imagery." In 2019 IEEE Winter Conference on Applications of Computer Vision (WACV), pp. 561-569. IEEE, 2019.