Featured Publications

All Publications

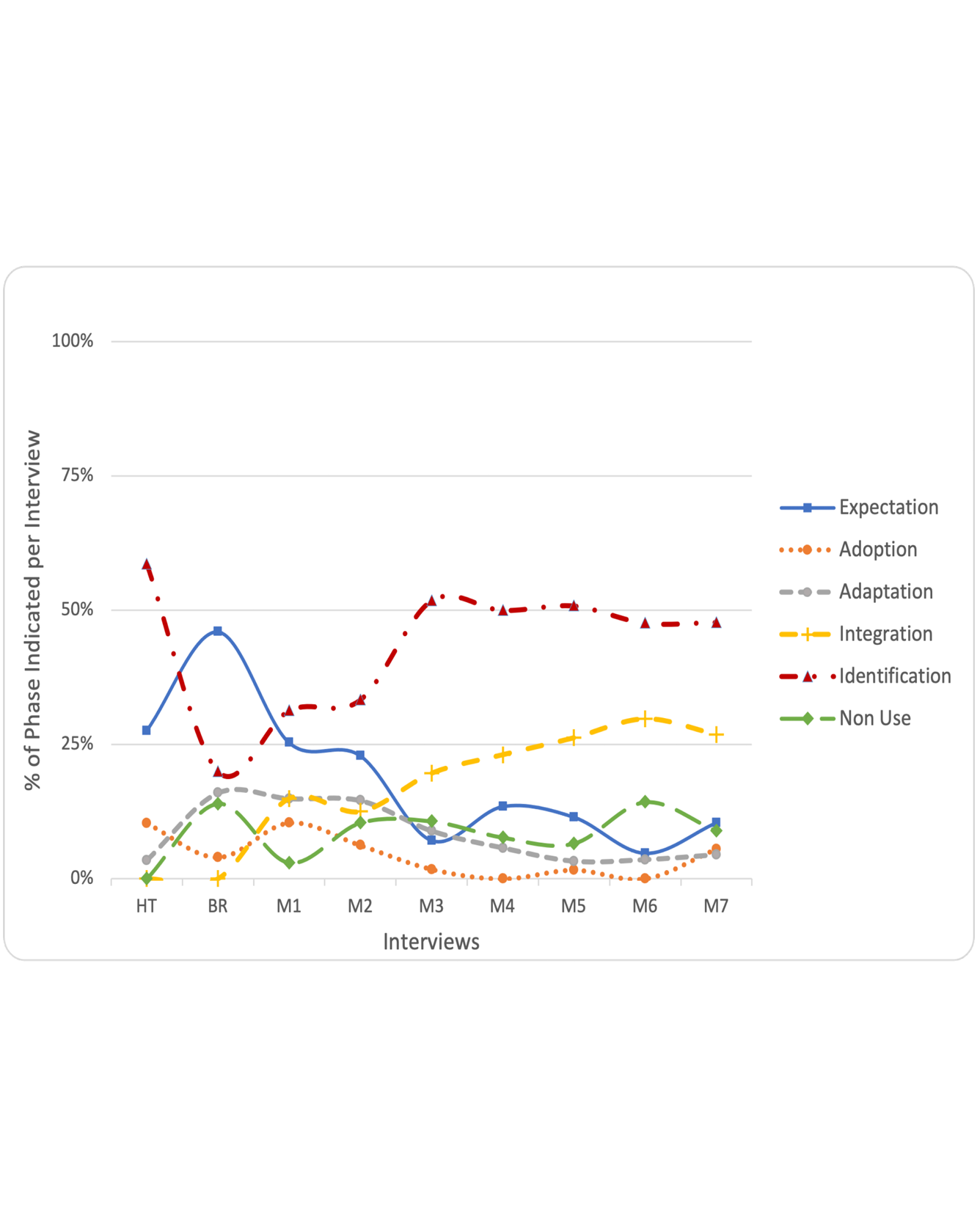

Loneliness has a direct impact on mental and physical health. This is especially relevant to older adults. In prior studies, socially isolated older adults wanted technology that would help them feel more physically present even across distances, such as telepresence robots. However, how useful this technology can be directly depends on whether people accept it over the long term. In this paper, we describe a case study in which we introduced telepresence robots into homes of older adults for seven months. We investigate how older adults’ progression through acceptance phases ebbed and flowed. We describe primary factors that affected speed of progression through acceptance phases: solving problems with technology, life situations (business vs. routines), and personality. We introduce example personas based on this case study. We also propose changes to the longitudinal technology-acceptance framework to take this more nuanced view into account. These outcomes will help future researchers and practitioners to better understand and influence longitudinal technology acceptance.

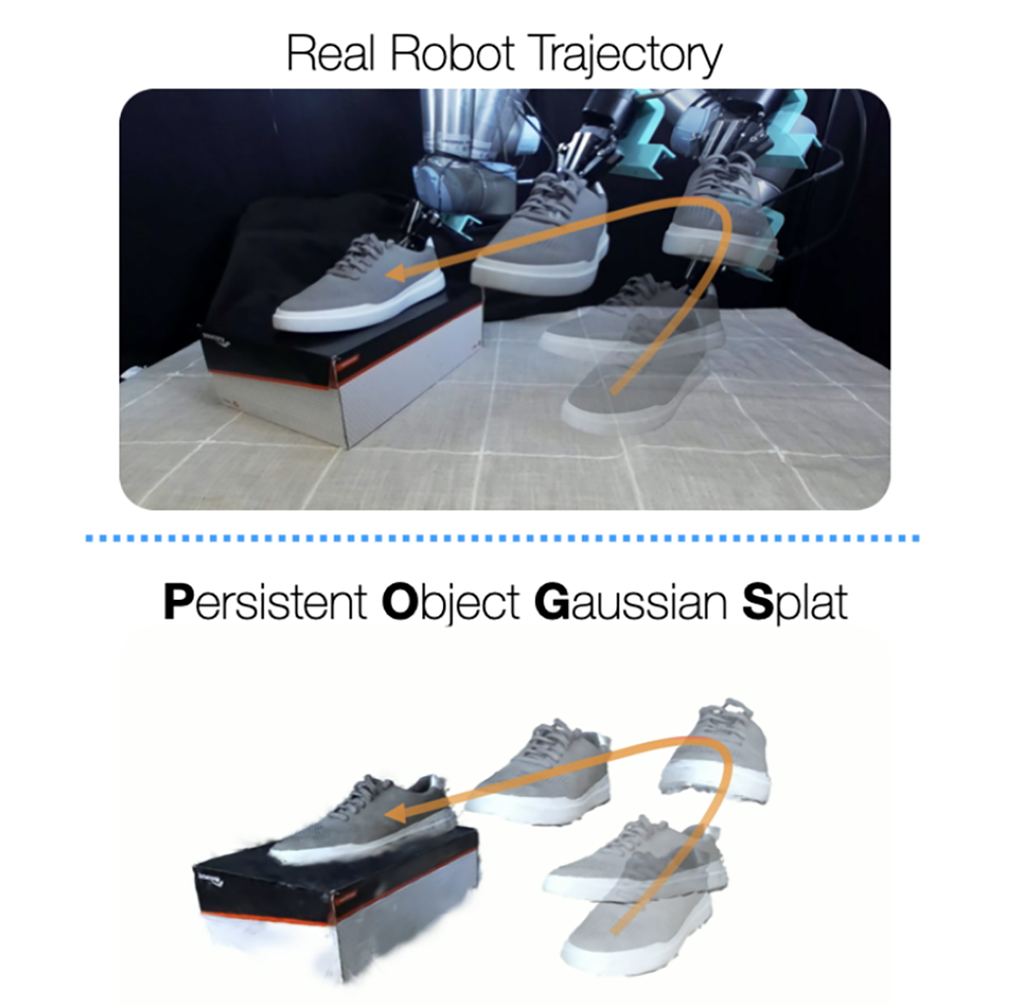

Tracking and manipulating irregularly-shaped, previously unseen objects in dynamic environments is important for robotic applications in manufacturing, assembly, and logistics. Recently introduced Gaussian Splats efficiently model object geometry, but lack persistent state estimation for task-oriented manipulation. We present Persistent Object Gaussian Splat (POGS), a system that embeds semantics, self-supervised visual features, and object grouping features into a compact representation that can be continuously updated to estimate the pose of scanned objects. POGS updates object states without requiring expensive rescanning or prior CAD models of objects. After an initial multi-view scene capture and training phase, POGS uses a single stereo camera to integrate depth estimates along with self-supervised vision encoder features for object pose estimation. POGS supports grasping, reorientation, and natural language-driven manipulation by refining object pose estimates, facilitating sequential object reset operations with human-induced object perturbations and tool servoing, where robots recover tool pose despite tool perturbations of up to 30°. POGS achieves up to 12 consecutive successful object resets and recovers from 80% of in-grasp tool perturbations. READ MORE

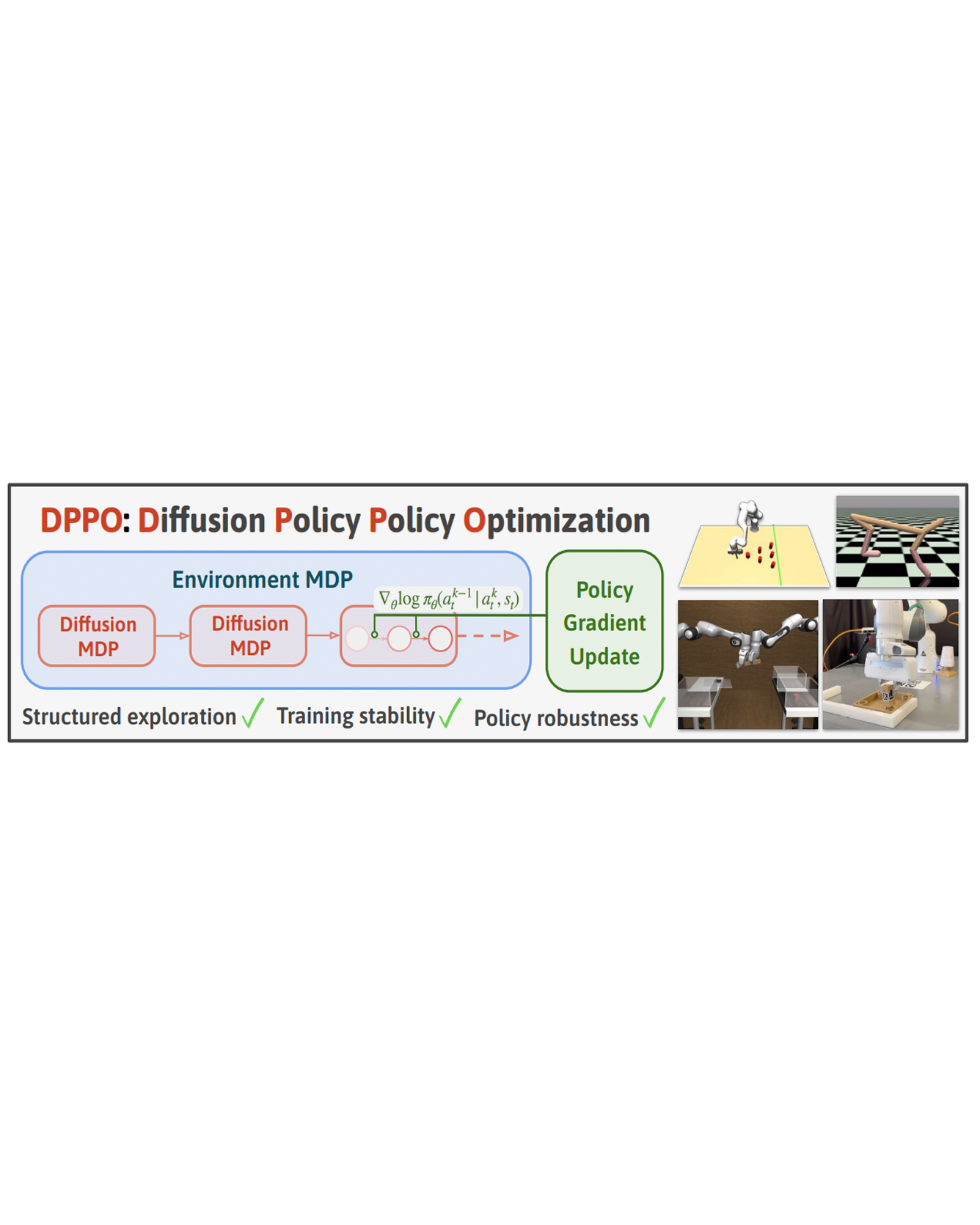

We introduce Diffusion Policy Policy Optimization, DPPO, an algorithmic framework including best practices for fine-tuning diffusion-based policies (e.g. Diffusion Policy) in continuous control and robot learning tasks using the policy gradient (PG) method from reinforcement learning (RL). PG methods are ubiquitous in training RL policies with other policy parameterizations; nevertheless, they had been conjectured to be less efficient for diffusion-based policies. Surprisingly, we show that DPPO achieves the strongest overall performance and efficiency for fine-tuning in common benchmarks compared to other RL methods for diffusion-based policies and also compared to PG fine-tuning of other policy parameterizations. Through experimental investigation, we find that DPPO takes advantage of unique synergies between RL fine-tuning and the diffusion parameterization, leading to structured and on-manifold exploration, stable training, and strong policy robustness. We further demonstrate the strengths of DPPO in a range of realistic settings, including simulated robotic tasks with pixel observations, and via zero-shot deployment of simulation-trained policies on robot hardware in a long-horizon, multi-stage manipulation task. Website with code: this http URL.

This work addresses the challenge of streamed video depth estimation, which expects not only per-frame accuracy but, more importantly, cross-frame consistency. We argue that sharing contextual information between frames or clips is pivotal in fostering temporal consistency. Thus, instead of directly developing a depth estimator from scratch, we reformulate this predictive task into a conditional generation problem to provide contextual information within a clip and across clips. Specifically, we propose a consistent context-aware training and inference strategy for arbitrarily long videos to provide cross-clip context. We sample independent noise levels for each frame within a clip during training while using a sliding window strategy and initializing overlapping frames with previously predicted frames without adding noise. Moreover, we design an effective training strategy to provide context within a clip. Extensive experimental results validate our design choices and demonstrate the superiority of our approach, dubbed ChronoDepth. Project page: this https URL. READ MORE

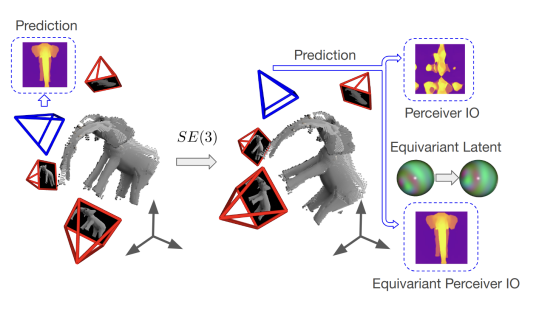

Incorporating inductive bias by embedding geometric entities (such as rays) as input has proven successful in multi-view learning. However, the methods adopting this technique typically lack equivariance, which is crucial for effective 3D learning. Equivariance serves as a valuable inductive prior, aiding in the generation of robust multi-view features for 3D scene understanding. In this paper, we explore the application of equivariant multi-view learning to depth estimation, not only recognizing its significance for computer vision and robotics but also addressing the limitations of previous research. Most prior studies have either overlooked equivariance in this setting or achieved only approximate equivariance through data augmentation, which often leads to inconsistencies across different reference frames. To address this issue, we propose to embed SE(3) equivariance into the Perceiver IO architecture. We employ Spherical Harmonics for positional encoding to ensure 3D rotation equivariance, and develop a specialized equivariant encoder and decoder within the Perceiver IO architecture. To validate our model, we applied it to the task of stereo depth estimation, achieving state of the art results on real-world datasets without explicit geometric constraints or extensive data augmentation.

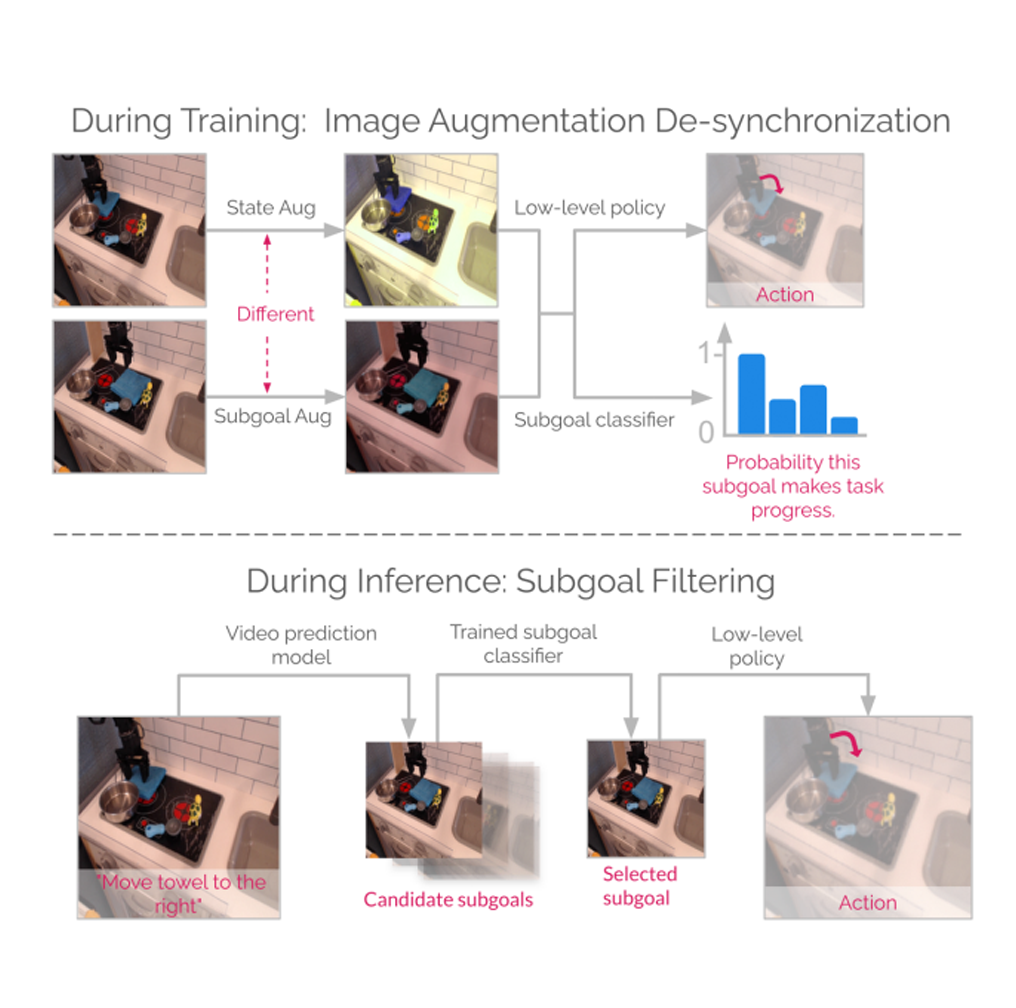

Image and video generative models that are pre-trained on Internet-scale data can greatly increase the generalization capacity of robot learning systems. These models can function as high-level planners, generating intermediate subgoals for low-level goal-conditioned policies to reach. However, the performance of these systems can be greatly bottlenecked by the interface between generative models and low-level controllers. For example, generative models may predict photorealistic yet physically infeasible frames that confuse low-level policies. Low-level policies may also be sensitive to subtle visual artifacts in generated goal images. This paper addresses these two facets of generalization, providing an interface to effectively "glue together" language-conditioned image or video prediction models with low-level goal-conditioned policies. Our method, Generative Hierarchical Imitation Learning-Glue (GHIL-Glue), filters out subgoals that do not lead to task progress and improves the robustness of goal-conditioned policies to generated subgoals with harmful visual artifacts. We find in extensive experiments in both simulated and real environments that GHIL-Glue achieves a 25% improvement across several hierarchical models that leverage generative subgoals, achieving a new state-of-the-art on the CALVIN simulation benchmark for policies using observations from a single RGB camera. GHIL-Glue also outperforms other generalist robot policies across 3/4 language-conditioned manipulation tasks testing zero-shot generalization in physical experiments. READ MORE

While 2D diffusion models generate realistic, high-detail images, 3D shape generation methods like Score Distillation Sampling (SDS) built on these 2D diffusion models produce cartoon-like, over-smoothed shapes. To help explain this discrepancy, we show that the image guidance used in Score Distillation can be understood as the velocity field of a 2D denoising generative process, up to the choice of a noise term. In particular, after a change of variables, SDS resembles a high-variance version of Denoising Diffusion Implicit Models (DDIM) with a differently-sampled noise term: SDS introduces noise i.i.d. randomly at each step, while DDIM infers it from the previous noise predictions. This excessive variance can lead to over-smoothing and unrealistic outputs. We show that a better noise approximation can be recovered by inverting DDIM in each SDS update step. This modification makes SDS's generative process for 2D images almost identical to DDIM. In 3D, it removes over-smoothing, preserves higher-frequency detail, and brings the generation quality closer to that of 2D samplers. Experimentally, our method achieves better or similar 3D generation quality compared to other state-of-the-art Score Distillation methods, all without training additional neural networks or multi-view supervision, and providing useful insights into relationship between 2D and 3D asset generation with diffusion models. READ MORE

Physics-based simulation is essential for developing and evaluating robot manipulation policies, particularly in scenarios involving deformable objects and complex contact interactions. However, existing simulators often struggle to balance computational efficiency with numerical accuracy, especially when modeling deformable materials with frictional contact constraints. We introduce an efficient subspace representation for the Incremental Potential Contact (IPC) method, leveraging model reduction to decrease the number of degrees of freedom. Our approach decouples simulation complexity from the resolution of the input model by representing elasticity in a low-resolution subspace while maintaining collision constraints on an embedded high-resolution surface. Our barrier formulation ensures intersection-free trajectories and configurations regardless of material stiffness, time step size, or contact severity. We validate our simulator through quantitative experiments with a soft bubble gripper grasping and qualitative demonstrations of placing a plate on a dish rack. The results demonstrate our simulator’s efficiency, physical accuracy, computational stability, and robust handling of frictional contact, making it well-suited for generating demonstration data and evaluating downstream robot training applications. More details and supplementary material are on the website: https://sites.google.com/view/embedded-ipc. READ MORE

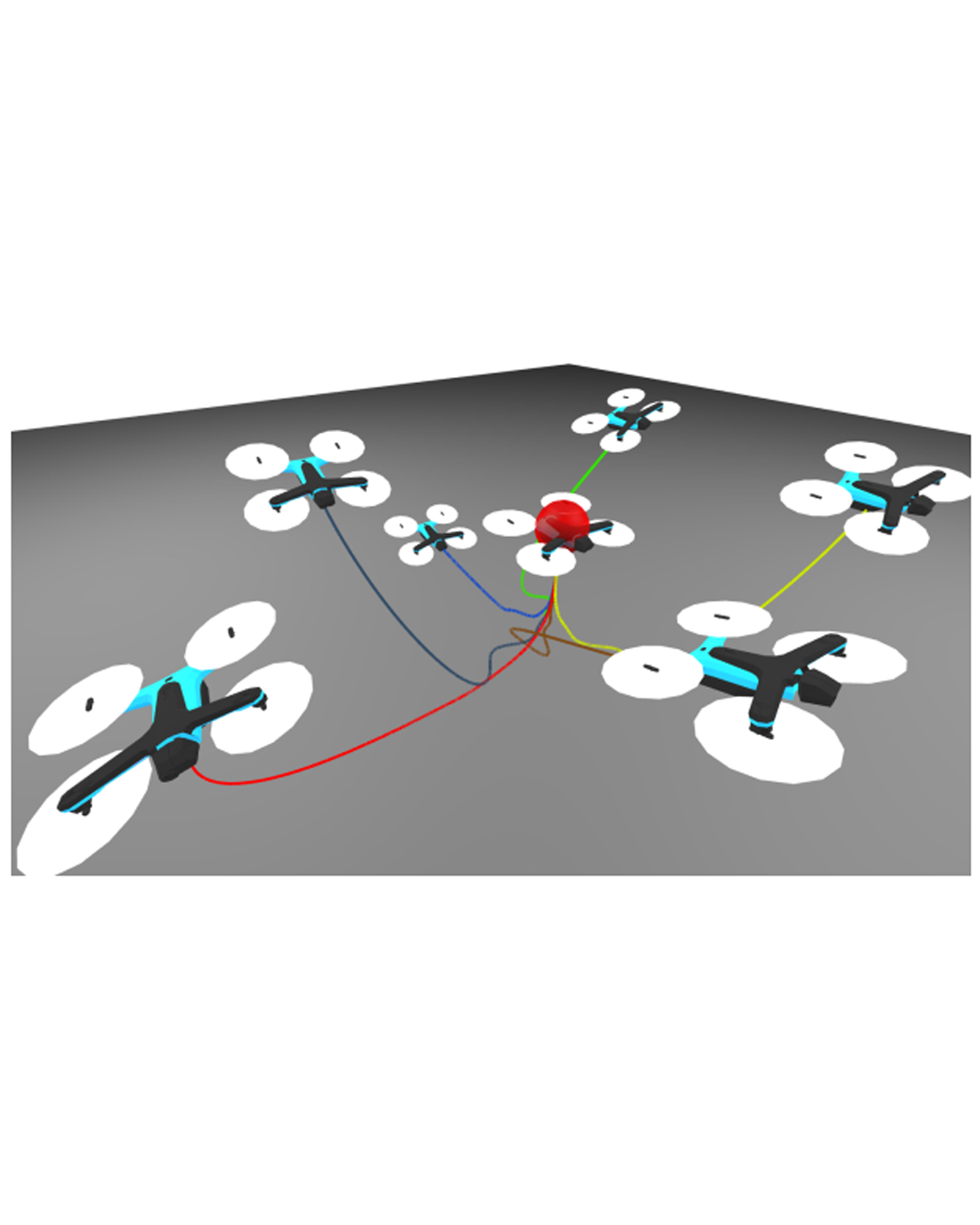

Safety and stability are essential properties of control systems. Control Barrier Functions (CBFs) and Control Lyapunov Functions (CLFs) are powerful tools to ensure safety and stability respectively. However, previous approaches typically verify and synthesize the CBFs and CLFs separately, satisfying their respective constraints, without proving that the CBFs and CLFs are compatible with each other, namely at every state, there exists control actions within the input limits that satisfy both the CBF and CLF constraints simultaneously. Ignoring the compatibility criteria might cause the CLF-CBFQP controller to fail at runtime. There exists some recent works that synthesized compatible CLF and CBF, but relying on nominal polynomial or rational controllers, which is just a sufficient but not necessary condition for compatibility. In this work, we investigate verification and synthesis of compatible CBF and CLF independent from any nominal controllers. We derive exact necessary and sufficient conditions for compatibility, and further formulate Sum-Of-Squares programs for the compatibility verification. Based on our verification framework, we also design a nominal-controller-free synthesis method, which can effectively expands the compatible region, in which the system is guaranteed to be both safe and stable. We evaluate our method on a non-linear toy problem, and also a 3D quadrotor to demonstrate its scalability. The code is open-sourced at https://github.com/hongkai-dai/compatible_clf_cbf. READ MORE

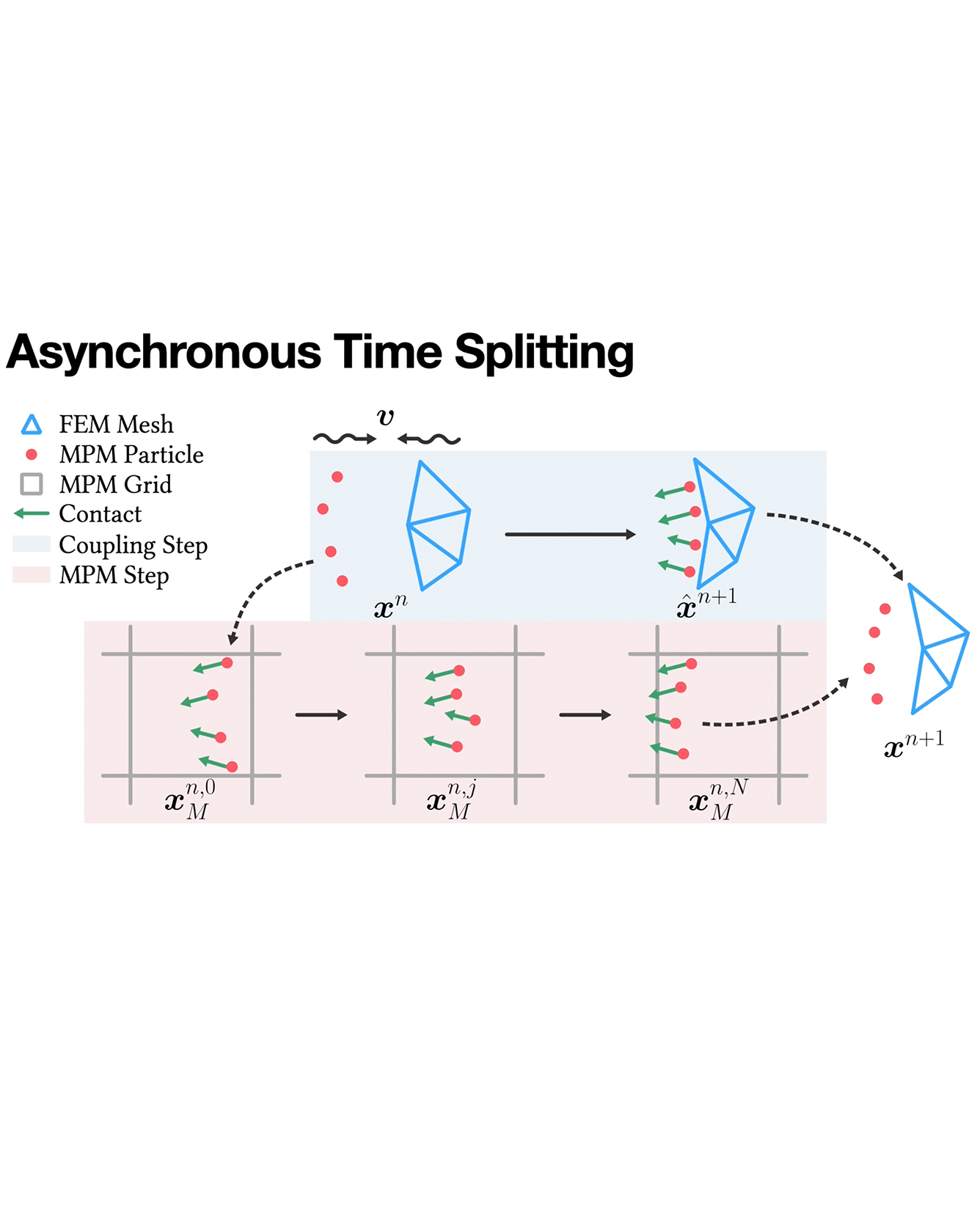

This paper presents a novel method to couple Finite Element Methods (FEM), typically employed for modeling Lagrangian solids such as flesh, cloth, hair, and rigid bodies, with Material Point Methods (MPM), which are well-suited for simulating materials undergoing substantial deformation and topology change, including Newtonian/non-Newtonian fluid, granular materials, and fracturing materials. The challenge of coupling these diverse methods arises from their contrasting computational needs: implicit FEM integration is often favored to enjoy stability and large timesteps, while explicit MPM integration benefits from its allowance for efficient GPU optimization and flexibility of applying different plasticity models, which only allows for moderate timesteps. To bridge this gap, a mixed implicit-explicit time integration (IMEX) approach is proposed, utilizing principles from time splitting for partial differential equations and optimization-based time integrators. This method adopts incremental potential contact (IPC) to define a variational frictional contact model between the two materials, serving as the primary coupling mechanism. Our method enables implicit FEM and explicit MPM to coexist with significantly different timestep sizes while preserving two-way coupling. Experimental results demonstrate the potential of our method as a strong foundation for future exploration and enhancement in the field of multi-material simulation. READ MORE